An Overview of Toxicity Testing in Drug Discovery

Identifying viable lead compounds is a major milestone in drug discovery, but it is only the beginning of a long journey to verify that a compound is effective and safe for treating human disease. An effective therapy must pass through years of screening, animal testing, and clinical trials before it becomes available for public use.

A large fraction of candidates still fail on human safety even after extensive animal testing. Even for drugs that reach the market, there remains the challenge of monitoring for the many latent or unanticipated side effects that could emerge from long-term treatment. The recalls of the heartburn drug Zantac and the anti-inflammatory drug Vioxx are high-profile examples of human toxicity that eluded standard trials.

Systematic tests in mammals are costly and time-consuming, in part because several organ systems must be tested separately, and even together these tests only partially cover the potential range of toxicity.

A systematic analysis of pre-clinical trials revealed that mammalian testing predicts human toxicity only about 50% of the time [1]. Combining multiple animal models can raise predictivity to ~70%, but at the cost of additional mammalian testing. Early screening in cultured human cells can narrow the field or test specific modes of action, but cell cultures are prone to a high rate of false positives, and their chemical sensitivity translates poorly to whole-animal systems.

However, using small animal models such as nematode worms or zebrafish as a bridge between cultured cells and mammals can reduce time and cost through early screening and elimination of toxic candidates [4]. Early testing in the nematode Caenorhabditis elegans can also broaden the scope of testing to include neglected chemical libraries, multiple related compounds, and an expanded range of dosages. One can kill nematodes by feeding them any number of noxious chemicals, but how effectively can worms predict the potential human toxicity of drug leads? And given that dosage is often the difference between a remedy and a poison, how well does a drug's dosage translate between worms and humans? Finally, since worms lack most of the organ systems that are key targets of drug toxicity, how do we test toxicity in organs they don't have? We will discuss how InVivo Biosystems addresses these questions when using C. elegans as a model for drug testing.

What makes an effective toxicity assay? Sensitivity vs. Selectivity

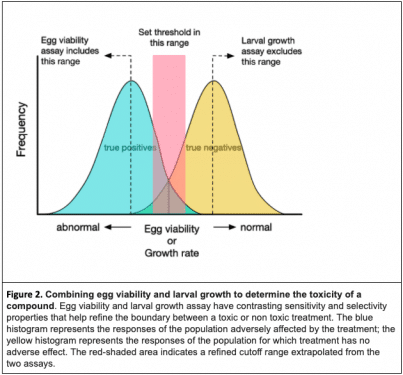

Predictive toxicology requires a balance between catching all the compounds that pose a hazard and excluding those that do not pose a risk. Suppose you are screening a panel of lead compounds and you want to eliminate those that are most likely to be toxic. The “sensitivity” of a test is the likelihood that a truly toxic compound will score as a positive hit. Capturing all of the toxic compounds, however, will likely also flag a large number of harmless ones, which raises the need for a test that is also selective.

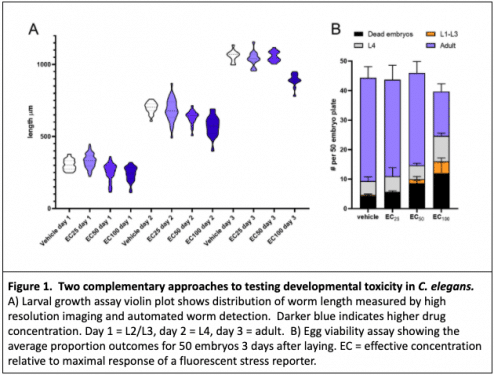

The ability of a test to correctly clear non-toxic compounds, rather than flagging them as false positives, is known as its “selectivity” (in diagnostic statistics this is usually called specificity). Few assays on their own provide both the sensitivity and the selectivity needed to identify only the toxic treatments; however, a strategic combination of assays can refine the pool so that it retains most of the truly non-toxic compounds while excluding most of the truly toxic ones. When testing drug toxicity and dosage in worms, we use multiple assays. Two complementary assays that we often deploy in tandem are the larval growth assay and the egg viability assay.
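Both measures can be computed from a screen of reference compounds whose toxicity is already known. The sketch below is illustrative only: the `ScreenCounts` class, the function names, and the counts are hypothetical, and “selectivity” is computed the way diagnostic statistics computes specificity.

```python
from dataclasses import dataclass


@dataclass
class ScreenCounts:
    """Hypothetical outcome counts for an assay scored against known toxicity."""
    true_pos: int   # toxic compounds the assay flagged
    false_neg: int  # toxic compounds the assay missed
    true_neg: int   # non-toxic compounds the assay cleared
    false_pos: int  # non-toxic compounds the assay flagged


def sensitivity(c: ScreenCounts) -> float:
    """Fraction of truly toxic compounds that score as positive hits."""
    return c.true_pos / (c.true_pos + c.false_neg)


def selectivity(c: ScreenCounts) -> float:
    """Fraction of truly non-toxic compounds that score as negative
    (equivalent to specificity in diagnostic statistics)."""
    return c.true_neg / (c.true_neg + c.false_pos)


# Hypothetical panel: 20 toxic and 80 non-toxic reference compounds.
counts = ScreenCounts(true_pos=18, false_neg=2, true_neg=40, false_pos=40)
print(f"sensitivity = {sensitivity(counts):.2f}")  # sensitivity = 0.90
print(f"selectivity = {selectivity(counts):.2f}")  # selectivity = 0.50
```

This hypothetical assay catches 18 of the 20 toxic compounds but also flags half of the harmless ones, which is the trade-off described above.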

Larval Growth Assay: the ‘Sensitive’ Test

C. elegans has a deterministic developmental pattern in which nearly every adult cell has a defined lineage. Because cell fates are tightly constrained and few multipotent cells are available to compensate for disturbances, C. elegans is a sensitive and powerful tool for studying the effects of developmental toxins. In a larval growth assay, we use a time course of high-resolution images and automated object tracking to precisely measure the size of hundreds to thousands of worms over the course of development. This highly sensitive assay picks up minor perturbations in development, so we can be reasonably confident that compounds or treatments that test negative will not adversely affect development in downstream assays or other systems.
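As a rough sketch of how tracked sizes might be summarized, the code below compares median worm length in treated and control wells at each imaging timepoint. The data layout, function names, lengths, and the 0.9 cutoff are all hypothetical; a real pipeline would likely also use replicate wells and a statistical test rather than a fixed threshold.

```python
from statistics import median


def growth_ratios(control: dict[int, list[float]],
                  treated: dict[int, list[float]]) -> dict[int, float]:
    """Median treated length divided by median control length at each timepoint.

    Both arguments map a timepoint (hours) to per-worm lengths (µm).
    """
    return {
        t: median(treated[t]) / median(control[t])
        for t in sorted(control)
        if t in treated
    }


def delayed_timepoints(ratios: dict[int, float], threshold: float = 0.9) -> list[int]:
    """Timepoints where treated worms are smaller than `threshold` x control size.

    The 0.9 default is an arbitrary illustrative cutoff, not a validated one.
    """
    return [t for t, r in ratios.items() if r < threshold]


# Hypothetical tracking output for three worms per condition.
control = {24: [300.0, 310.0, 305.0], 48: [700.0, 720.0, 710.0]}
treated = {24: [290.0, 300.0, 295.0], 48: [560.0, 580.0, 570.0]}

ratios = growth_ratios(control, treated)
print(ratios)
print(delayed_timepoints(ratios))  # [48]
```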

Although the larval growth assay has been shown to predict potential mammalian toxins, it suffers from low selectivity: it also flags many otherwise benign compounds [5]. We therefore complement it with an egg viability assay, in which we measure the percentage of viable progeny produced by worms treated with the compound.

Egg Viability Assay: the ‘Selective’ Test

Nematodes are adapted to variable and sometimes harsh environments, and their tough cuticle resists absorption of environmental toxins. Eggs are also resistant to external chemicals once their shells have formed. For most compounds, the route of exposure for developing embryos is via ingestion and metabolism by the mother. Because a compound must get past these barriers to reach the embryo, those that cause egg lethality have a higher probability of being true positives and are more likely to be toxic in mammals.
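The egg viability readout itself is a simple proportion. A minimal sketch, assuming eggs are counted per plate and the result is normalized to a vehicle-treated control (the function names and counts are hypothetical):

```python
def percent_viable(hatched: int, laid: int) -> float:
    """Percentage of laid eggs that hatch into viable progeny."""
    if laid <= 0:
        raise ValueError("laid must be positive")
    return 100.0 * hatched / laid


def relative_viability(treated_hatched: int, treated_laid: int,
                       control_hatched: int, control_laid: int) -> float:
    """Treated viability expressed as a fraction of vehicle-control viability."""
    return (percent_viable(treated_hatched, treated_laid)
            / percent_viable(control_hatched, control_laid))


# Hypothetical plate counts.
print(percent_viable(190, 200))               # 95.0
print(percent_viable(95, 200))                # 47.5
print(relative_viability(95, 200, 190, 200))  # 0.5
```

Normalizing to the control separates compound effects from baseline embryonic lethality on the plate.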

In a large study that tested 72 compounds whose mammalian developmental toxicity had already been characterized, 89% of the compounds that reduced egg viability in C. elegans were also toxic in mammals [6]. Again, a single assay does not provide the complete picture: only 25% of the compounds that did not reduce egg viability were free of developmental toxicity in mammals. In other words, the egg viability assay is a powerful positive predictor of toxicity but a weak negative predictor. It has very high selectivity but low sensitivity.
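The distinction between a strong positive predictor and a weak negative one can be made concrete with positive and negative predictive values (PPV and NPV). The counts below are invented for illustration and are not the data from the cited study; they simply reproduce the same qualitative pattern. The function name is hypothetical.

```python
def predictive_summary(tp: int, fn: int, tn: int, fp: int) -> dict[str, float]:
    """Sensitivity, selectivity, and predictive values from a 2x2 table.

    tp/fn: truly toxic compounds the assay flagged/missed
    tn/fp: truly non-toxic compounds the assay cleared/flagged
    """
    return {
        "sensitivity": tp / (tp + fn),
        "selectivity": tn / (tn + fp),  # a.k.a. specificity
        "ppv": tp / (tp + fp),  # chance a flagged compound is truly toxic
        "npv": tn / (tn + fn),  # chance a cleared compound is truly non-toxic
    }


# Hypothetical panel dominated by toxic compounds: 50 toxic, 10 non-toxic.
summary = predictive_summary(tp=20, fn=30, tn=9, fp=1)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
# sensitivity: 0.40
# selectivity: 0.90
# ppv: 0.95
# npv: 0.23
```

PPV and NPV also depend on how many truly toxic compounds are in the panel: when most compounds are toxic, an assay that misses many of them will produce many wrong negative calls.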

By combining a highly sensitive larval growth assay with a highly selective egg viability assay, we can narrow the pool of candidate compounds or dosages to those least (or most) likely to be toxic (Figure 2). For further refinement, C. elegans offers dozens of other assays, ranging from simple manual tests to sophisticated high-content screens, many of which can be tailored to assess perturbations of specific pathways. In the broader field, the same approach can be extended to designing a combination of cell culture, invertebrate, and vertebrate model systems with optimal predictive power for drug toxicity.
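One way to express this combination is as a simple triage rule. The sketch below is a hypothetical decision rule for illustration, not InVivo Biosystems' actual analysis pipeline; the `Call` labels, compound names, and results are invented.

```python
from enum import Enum


class Call(Enum):
    LIKELY_SAFE = "likely non-toxic"
    LIKELY_TOXIC = "likely toxic"
    FOLLOW_UP = "needs further testing"


def triage(growth_hit: bool, egg_hit: bool) -> Call:
    """Combine a sensitive assay (larval growth) with a selective one (egg viability).

    - Negative in both: few toxic compounds escape the sensitive assay,
      so treat as likely non-toxic.
    - Positive in both: the selective assay rarely flags harmless compounds,
      so treat as likely toxic.
    - Any disagreement: send to additional assays.
    """
    if not growth_hit and not egg_hit:
        return Call.LIKELY_SAFE
    if growth_hit and egg_hit:
        return Call.LIKELY_TOXIC
    return Call.FOLLOW_UP


# Hypothetical results: compound -> (larval growth hit, egg viability hit)
results = {"cmpd-A": (False, False), "cmpd-B": (True, True), "cmpd-C": (True, False)}
for name, (growth, egg) in results.items():
    print(name, triage(growth, egg).value)
```

Compounds flagged only by the sensitive assay are the ones most likely to be false positives, so they are routed to further testing rather than discarded.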

Does Higher Toxicity in Worms Equal Higher Toxicity in Humans?

Drug development frequently starts with an initial lead compound and then systematically tests a range of structurally related compounds, or analogs. The utility of worms for drug toxicity testing is evident in the conservation of toxicity rankings: within a set of compounds with different toxicities, the compounds most toxic to worms also tend to be the most toxic to mammals [2,3,5]. This is especially useful when screening classes of related compounds, because an assay in C. elegans can quickly and inexpensively determine which among dozens of analogs are least likely to have toxic effects [6].
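Conservation of rankings is commonly quantified with a rank correlation such as Spearman's rho, which asks whether two sets of measurements order compounds the same way regardless of their absolute scale. A minimal, dependency-free sketch, assuming one toxicity value per compound in each species (for example, an LC50 in worms and an LD50 in rodents); the five analogs and their values are hypothetical:

```python
def ranks(values: list[float]) -> list[float]:
    """Rank values from 1 (smallest) upward; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman's rho, computed as the Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# Hypothetical analogs: lower values mean more toxic in both species.
worm_lc50 = [0.5, 1.2, 3.0, 8.0, 20.0]   # mM
rodent_ld50 = [40, 150, 120, 900, 2500]  # mg/kg
print(f"rho = {spearman(worm_lc50, rodent_ld50):.2f}")  # rho = 0.90
```

A rho near 1 means the two species rank the compounds in nearly the same order even though the absolute values and units differ; in this invented example only the second and third analogs swap places.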

In addition to expanding the range of compounds tested, the throughput of C. elegans testing also expands the range of dosages that can be tested. One of the biggest limitations of mammalian toxicity testing is that its expense prohibits testing more than one or a few dosages per compound. Because almost any drug is toxic at a high enough dosage, the choice of dosage matters, yet determining an ideal testing dosage for every individual lead in mammals is extremely impractical. Instead, animals are frequently treated either with the maximum tolerated dose or with a single arbitrary dosage across an entire panel.

Because C. elegans testing takes a fraction of the cost and time of mammalian testing, it is possible to test a wider range of dosages, enabling more thorough dose-response analysis. But how well does a dosage in worms translate to mammals? One key advantage of C. elegans testing is that, like higher organisms, worms take up drugs orally. Cultured cells, by contrast, have notoriously unpredictable sensitivity to drugs. Although the absolute dosage that affects worms does not translate directly to mammals nearly as well as the relative toxicity ranking does, the dose response in worms can provide a more informative reference dosage for subsequent mammalian testing than cell-culture studies can. The short generation time of C. elegans also makes it practical to test low doses over an entire lifetime or to look for intergenerational effects.
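A wider dose series supports fitting or interpolating a dose-response curve. The sketch below uses a simple Hill model and estimates the EC50 (the dose giving a half-maximal effect) by linear interpolation on log dose, a deliberately crude stand-in for proper nonlinear curve fitting. The function names, doses, and responses are hypothetical.

```python
import math
from typing import Optional


def hill_response(dose: float, ec50: float, hill: float = 1.0) -> float:
    """Fractional effect (0 to 1) predicted by a simple Hill dose-response model."""
    return dose**hill / (dose**hill + ec50**hill)


def estimate_ec50(doses: list[float], responses: list[float],
                  level: float = 0.5) -> Optional[float]:
    """Estimate the dose producing `level` effect by interpolating on log10(dose).

    Assumes doses are positive and sorted ascending, and that the response rises
    with dose. Returns None if the measured responses never bracket `level`.
    """
    for d0, r0, d1, r1 in zip(doses, responses, doses[1:], responses[1:]):
        if r0 <= level <= r1 and r1 > r0:
            frac = (level - r0) / (r1 - r0)
            log_dose = math.log10(d0) + frac * (math.log10(d1) - math.log10(d0))
            return 10 ** log_dose
    return None


# Hypothetical worm dose series (µM) and fraction of animals affected.
doses = [1.0, 3.0, 10.0, 30.0, 100.0]
affected = [0.05, 0.20, 0.45, 0.80, 0.95]
print(estimate_ec50(doses, affected))
```

Interpolation uses only the two doses that bracket the 50% response; a four-parameter logistic fit with confidence intervals would usually be preferred when the data allow it.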

How Do We Test For Toxicity To Organs That Worms Don’t Have?

How would one screen for cardiac toxicity when worms do not have a heart? Despite lacking key organ systems, worms still possess most of the key cellular pathways that operate in humans. For many pathways that operate in vertebrate-specific organ systems, worms have a different or simplified output. A pathway can be thought of as the driveshaft on a tractor: it might drive a planter on one piece of farm equipment and a harvester on another. The core component is the same, but the outputs differ. We refer to these different outputs as “phenologs”: phenotypes that manifest differently in a model system despite sharing a common cellular mechanism with mammals or humans. For example, although worms do not have a heart, they feed by rhythmic pumping of their pharynx, which is controlled by cellular mechanisms analogous to those regulating cardiac muscle in higher organisms. As toxicology progresses from an observational to a more mechanistic discipline, small animal models can accelerate the discovery of a drug's or toxin's mode of action.

Summary

Because time and expenses grow with every stage a drug candidate advances through, early testing and removal of potentially toxic compounds is essential to controlling costs. No single model, mammalian or otherwise, has been shown to be a consistent predictor of human toxicity. Using C. elegans studies in combination with other models, such as cultured cells, zebrafish, or other small animals, can substantially increase predictive power. A reliable predictive model would likely consist of a panel of assays spanning multiple species that balances sensitivity and selectivity. C. elegans has emerged as one of the more cost-effective components of such a model, which could considerably affect how drug candidates are prioritized and how much drug development costs.

InVivo Biosystems’ experienced team can take over and deliver the results you need through our C. elegans gene editing services. We welcome the opportunity to discuss your project with you.


References

1. Olson, H. et al. Concordance of the Toxicity of Pharmaceuticals in Humans and in Animals. Regulatory Toxicology and Pharmacology 32, 56–67 (2000).

2. Ferguson, Boyer, M. S. & Sprando, R. L. A method for ranking compounds based on their relative toxicity using neural networking, C. elegans, axenic liquid culture, and the COPAS parameters TOF and EXT. OAB 139–6 (2010). doi:10.2147/OAB.S13466

3. Li, Y. et al. Correlation of chemical acute toxicity between the nematode and the rodent. Toxicol. Res. 2, 403–10 (2013).

4. Hunt, P. R. The C. elegans model in toxicity testing. J. Appl. Toxicol. 37, 50–59 (2016).

5. Boyd, W. A. et al. Developmental Effects of the ToxCast™ Phase I and Phase II Chemicals in Caenorhabditis elegans and Corresponding Responses in Zebrafish, Rats, and Rabbits. Environmental Health Perspectives 124, 586–593 (2016).

6. Harlow, P. H. et al. The nematode Caenorhabditis elegans as a tool to predict chemical activity on mammalian development and identify mechanisms influencing toxicological outcome. Sci Rep 1–13 (2016). doi:10.1038/srep22965